EyeCI-derived gaze features, reliability checks, and browser-first workflows.

CK Zhang · Student researcher

Gaze and cognition tools that hold up outside the lab. HCI engineered for everyday use.

I build webcam-based gaze workflows that still hold up once they leave controlled demos and land in clinics, classrooms, and homes.

Work — Gaze & cognition

Gaze & cognition projects.

Webcam-based workflows for screening, experiments, and clinics.

Journey — Gaze pipeline

How the eye-tracking stack evolved.

1

EyePy — First research sprint on affordable gaze tracking

Built EyePy during a research program: an eye-tracking prototype that runs on low-cost webcams using dlib.

2

EyeTrax — Open-source webcam gaze toolkit

Turned the EyePy ideas into EyeTrax, a reusable library with calibration flows, smoothing filters, and an overlay tool built on MediaPipe landmarks so others could use the gaze pipeline without rewriting it.

Sample gaze trace from the EyeTrax overlay tool.
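To illustrate what a smoothing filter does in a webcam gaze pipeline, here is a minimal sketch that assumes a plain exponential moving average over (x, y) gaze estimates. It is not EyeTrax's actual code or API: the class name `EmaGazeSmoother` and the `alpha` parameter are hypothetical, and a real toolkit may use a different filter entirely.

```python
from dataclasses import dataclass
from typing import Optional, Tuple

Point = Tuple[float, float]


@dataclass
class EmaGazeSmoother:
    """Hypothetical exponential-moving-average smoother for gaze points."""

    alpha: float = 0.3              # weight of the newest sample (assumed default)
    _state: Optional[Point] = None  # last smoothed point, None before first update

    def __post_init__(self) -> None:
        if not 0.0 < self.alpha <= 1.0:
            raise ValueError("alpha must be in (0, 1]")

    def update(self, raw: Point) -> Point:
        """Blend a new raw gaze estimate into the running smoothed estimate."""
        if self._state is None:
            # First sample: nothing to blend with yet.
            self._state = raw
        else:
            sx, sy = self._state
            x, y = raw
            self._state = (
                self.alpha * x + (1.0 - self.alpha) * sx,
                self.alpha * y + (1.0 - self.alpha) * sy,
            )
        return self._state


smoother = EmaGazeSmoother(alpha=0.5)
smoother.update((0.0, 0.0))
print(smoother.update((10.0, 20.0)))  # (5.0, 10.0)
```

A lower `alpha` gives a steadier cursor at the cost of more lag, which is the usual trade-off when smoothing noisy webcam gaze.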

3

EyeCI — Webcam cognitive screening research

Built EyeCI on top of EyeTrax: designed tasks, ran early webcam sessions, cleaned the data, and trained a model that turns gaze traces into screening probabilities with reliability checks.

4

NeuroSight — umbrella for clinic and ASD research workflows

Includes Project Argus for clinic screening workflows and Project Iris for ASD-focused research with Xunfen Biotech.

NeuroSight

One shared gaze pipeline, then two distinct products: Project Argus for clinical screening and Project Iris for structured ASD-oriented research capture.

Project Argus: validated on 2,095 webcam sessions for outpatient and community screening.

Project Iris: co-developed with Xunfen Biotech around a structured research flow that combines questionnaire context and eye-movement capture.

Project Argus

Five-minute screening support for outpatient clinics and community screenings.

Browser-based intake designed to survive lighting changes, ordinary laptops, and the pacing of a real waiting room.

- Focus: deployment reliability under real lighting and device variability.

- Validation: tracked session-level failure modes such as occlusion, drift, and head pose.
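The failure modes listed above can be sketched as a simple per-session check. This is an illustration only, not the Project Argus implementation: the `Frame` fields, the `session_flags` function, and every threshold are hypothetical values chosen for the example.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Frame:
    """One webcam frame's tracking summary (hypothetical fields)."""

    eyes_visible: bool       # False when landmarks were lost, e.g. occlusion
    yaw_deg: float = 0.0     # estimated head yaw
    pitch_deg: float = 0.0   # estimated head pitch


def session_flags(
    frames: List[Frame],
    start_error_px: float,        # validation error at session start
    end_error_px: float,          # validation error at session end
    max_missing: float = 0.2,     # assumed tolerated share of lost frames
    max_pose_deg: float = 25.0,   # assumed head-pose limit
    max_off_pose: float = 0.2,    # assumed tolerated share of off-pose frames
    max_drift_px: float = 80.0,   # assumed tolerated growth in error
) -> List[str]:
    """Return the failure modes a session trips, in a fixed order."""
    if not frames:
        return ["empty"]
    flags: List[str] = []

    missing = sum(not f.eyes_visible for f in frames) / len(frames)
    if missing > max_missing:
        flags.append("occlusion")

    visible = [f for f in frames if f.eyes_visible]
    if visible:
        off_pose = sum(
            abs(f.yaw_deg) > max_pose_deg or abs(f.pitch_deg) > max_pose_deg
            for f in visible
        ) / len(visible)
        if off_pose > max_off_pose:
            flags.append("head_pose")

    if end_error_px - start_error_px > max_drift_px:
        flags.append("drift")
    return flags


frames = [Frame(eyes_visible=False)] * 3 + [Frame(eyes_visible=True)] * 7
print(session_flags(frames, start_error_px=40.0, end_error_px=150.0))
# ['occlusion', 'drift']
```

Returning named flags rather than a single pass/fail keeps the reason for a rejected session visible, which helps when failures cluster around one cause such as lighting.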

Project Iris

Structured ASD-focused research capture with parent context and guided gaze collection.

Project Iris keeps family and staff setup light while pairing questionnaire metadata with a consistent eye-movement collection sequence in collaboration with Xunfen Biotech.

- Focus: capture stable signals while keeping setup burden low for families and staff.

- Data stream: questionnaire metadata paired with guided webcam gaze collection.
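One way to picture the paired data stream is a single record that carries both the questionnaire answers and the gaze samples for a session. The sketch below is an assumption for illustration, not the Project Iris schema: `GazeSample`, `CaptureRecord`, and all field names are hypothetical.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Dict, List


@dataclass
class GazeSample:
    """One gaze estimate (hypothetical layout)."""

    t_ms: int   # time since the start of the guided sequence
    x: float    # normalized horizontal screen position, 0..1
    y: float    # normalized vertical screen position, 0..1


@dataclass
class CaptureRecord:
    """Questionnaire metadata paired with one guided gaze collection."""

    session_id: str
    questionnaire: Dict[str, str]
    gaze: List[GazeSample] = field(default_factory=list)

    def to_json(self) -> str:
        # asdict() recurses into the nested GazeSample dataclasses.
        return json.dumps(asdict(self), sort_keys=True)


record = CaptureRecord(
    session_id="demo-session",
    questionnaire={"q1": "yes"},
    gaze=[GazeSample(t_ms=0, x=0.5, y=0.5)],
)
print(record.to_json())
```

Keeping both streams under one session identifier means a gaze trace never has to be matched to its questionnaire context after the fact.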

Other things I’ve built.

Side projects and experiments in chronological order.

Mistake.nvim put autocorrect directly inside my notes.

I moved my diaries into Neovim and wanted spelling correction that stayed quiet and fast. Mistake.nvim started as a personal plugin and slowly turned into something other people installed too.

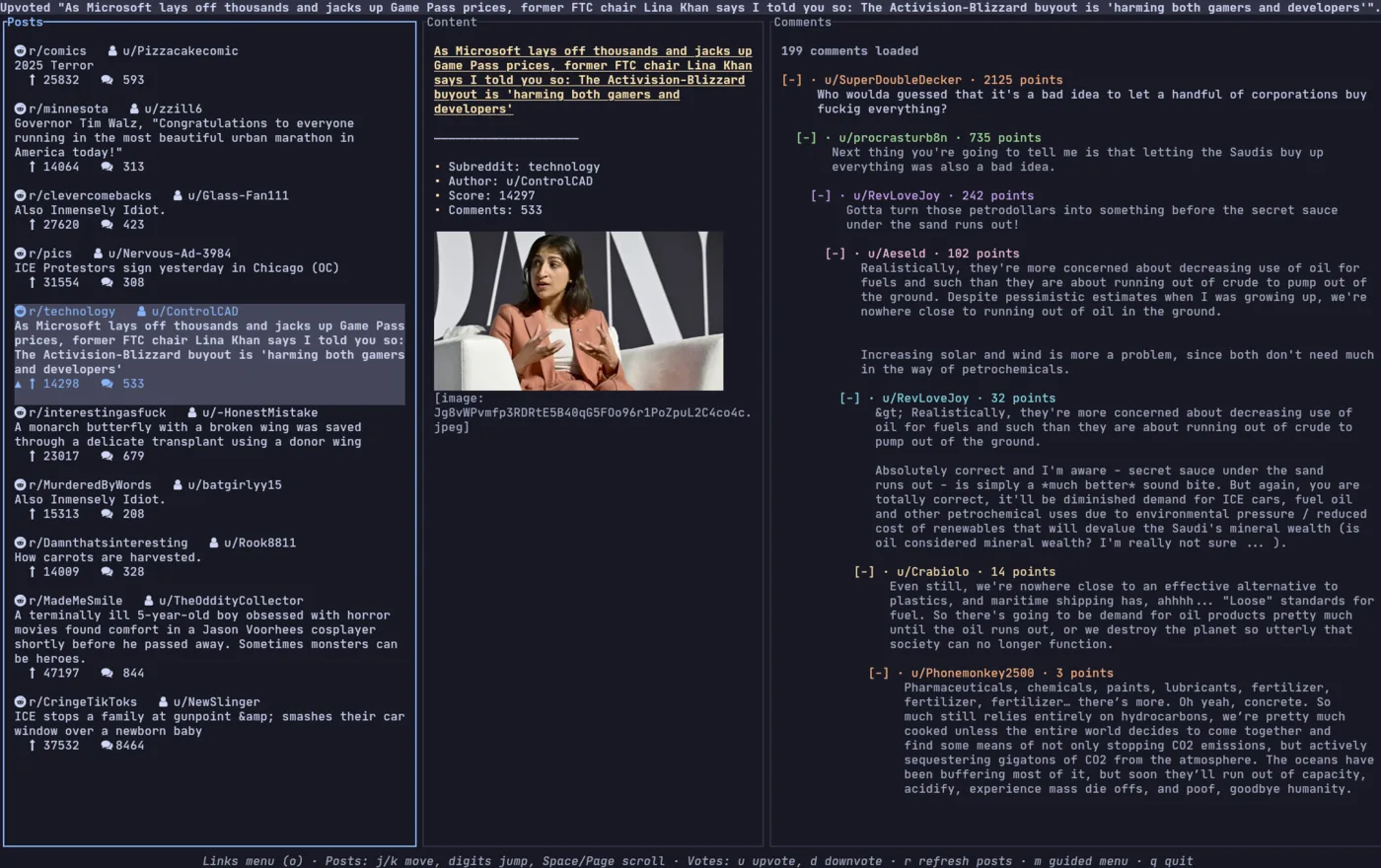

Role: Builder

Reddix let the browser fall away and the terminal become the interface.

I built Reddix so Reddit could live in the same terminal pane as my tools. It quickly became my playground for Rust async pipelines, caching, and release discipline.

Role: Solo builder

About

I’m CK (Kevin) Zhang, a student who likes building around gaze, cognition, and interfaces where biomedical engineering meets everyday devices.

I care about tools that can survive crowded clinics and shared classrooms without asking people to change how they behave. That’s why I’ve stayed with the same thread from EyePy to EyeTrax, EyeCI, and now NeuroSight, which includes Project Argus and Project Iris with Xunfen Biotech: keep the hardware cheap, use the signals well, and make the interface feel as close to “just look” as possible.

Long-term, I want to keep working on gaze and cognition tools within biomedical engineering and HCI.

Contact

If you’re working on gaze, cognition tools, terminal UIs, or related projects, I’m happy to chat or share what I’ve tried so far.

Email: ck.zhang26@gmail.com

GitHub: github.com/ck-zhang